Statistical analysis is the process of using data to describe what happened, estimate what is likely to be true, test ideas, measure relationships, and support decisions under uncertainty. A dataset can contain ages, test scores, survey answers, or any other measured information. Statistical analysis asks what those data can reasonably tell us, what they cannot tell us, and how much uncertainty remains.

This article explains what statistical analysis is, how it differs from statistical methods, the basic concepts behind it, the main types of statistical analysis, and how to interpret results responsibly.

What is statistical analysis?

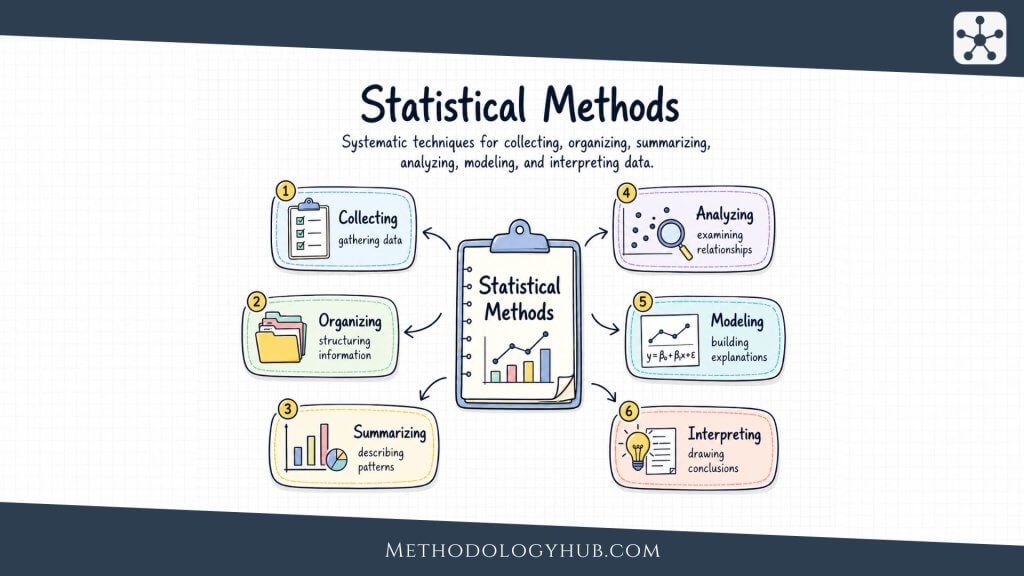

Statistical analysis is the use of statistical methods to collect, organize, summarize, model, and interpret research data. It helps answer questions such as: What does this dataset look like? Is this pattern large enough to matter? Could this result be due to random variation? Which variables move together? Which factors predict an outcome? How uncertain is this estimate?

A useful way to think about statistical analysis is that it sits between raw data and judgment. Raw data rarely speaks clearly by itself. A spreadsheet may contain thousands of rows, but the rows do not automatically explain whether a treatment helped, whether an intervention worked, whether a process changed, or whether a forecast is reliable. Statistical analysis gives structure to that judgment.

That structure matters because data can be misleading when it is handled casually. Averages can hide spread. Small samples can make noise look like a pattern. A strong correlation can tempt people into causal claims that the data does not support. A chart can make a weak effect look dramatic. A model can look precise while resting on weak assumptions. Good statistical analysis does not remove uncertainty. It makes uncertainty visible enough to reason with.

Statistical analysis definition

Statistical analysis is the process of applying statistical techniques to data in order to describe patterns, estimate quantities, test hypotheses, model relationships, and support decisions. It includes both simple summaries, such as averages and percentages, and more complex work, such as regression modeling, analysis of variance, survival analysis, causal inference, and simulation.

The definition is broad because the work is broad. A researcher comparing two clinical treatments, a public health researcher studying disease risk, a teacher reviewing test results, a city planner looking at traffic counts, and an engineering researcher estimating failure risk may all use statistical analysis. Their data, goals, and methods differ, but they share one problem: they need to make sense of variation.

Statistical analysis vs statistical methods

Statistical analysis and statistical methods are closely related, but they are not the same thing. Statistical methods are the specific techniques used to work with research data. Examples include descriptive statistics, confidence intervals, hypothesis tests, correlation, regression, ANOVA, logistic regression, time series models, survival analysis, and Monte Carlo simulation.

Statistical analysis is the broader process of choosing, applying, checking, and interpreting those methods. A simple way to separate them is this: statistical methods are the tools, while statistical analysis is the work of using those tools to answer a question.

For example, a t-test is a statistical method. Using that t-test to compare two groups, check whether its assumptions are reasonable, interpret the p-value and confidence interval, and explain the result in context is statistical analysis.

What is the goal of statistical analysis?

Statistical analysis usually has one or more practical goals. Sometimes the goal is description. You want to know what the data looks like before making any larger claim. Sometimes the goal is inference. You have a sample and want to say something careful about a wider population. Sometimes the goal is prediction. You want to estimate future or unknown outcomes. Sometimes the goal is decision support. You want to compare choices while accepting that the future is uncertain.

These aims often overlap. A study may begin with descriptive statistics, move into hypothesis testing, use regression to adjust for other variables, and end by reporting effect sizes and confidence intervals. A risk model may use probability distributions, simulation, sensitivity analysis, and scenario analysis together. Statistical analysis is rarely a single button press. It is usually a sequence of judgments.

Fundamentals of statistical analysis

Statistical analysis becomes much easier when the foundation is clear. Many errors happen before any test is chosen. The wrong approach is often selected because the data type was misunderstood, the measurement scale was ignored, the distribution was never checked, or the sample was treated as if it perfectly represented the population. Good analysis starts with the shape of the problem.

Data and variables

A variable is any characteristic that can take different values. Age, income, blood pressure, disease category, education level, delivery time, survey rating, treatment group, and pass or fail status are all variables. Before choosing a statistical method, you need to know what kind of variables you have.

Categorical data places observations into groups. Examples include gender, country, diagnosis type, disease status, and survey categories. Categorical variables can be nominal, where categories have no natural order, or ordinal, where the categories have a rank. Satisfaction ratings from very dissatisfied to very satisfied are ordinal because the order means something, even if the distance between categories is not exact.

Numerical data records quantities. Examples include height, test score, temperature, reaction time, blood pressure, and time to completion. Numerical variables may be discrete or continuous. Discrete data is counted in separate values, such as number of errors, number of children, or number of hospital visits. Continuous data can take values along a scale, such as weight, distance, time, or concentration.

The level of measurement also changes what you can safely do. Nominal data supports counts and proportions. Ordinal data supports ranking, but averages can become awkward if the distances between levels are not meaningful. Interval data has meaningful distances but no true zero, such as temperature in Celsius. Ratio data has meaningful distances and a true zero, such as weight, duration, or distance.

Data distributions

A distribution shows how values are spread. It tells you whether observations cluster near the center, stretch toward one side, have several peaks, include extreme values, or follow a shape close to a normal curve. Looking at the distribution is one of the first acts of statistical analysis because many methods depend on assumptions about the data.

The normal distribution is a symmetric bell-shaped distribution. Many basic statistical methods are taught with it because it has convenient mathematical properties and appears often enough to be useful. Still, real data is not automatically normal. Income, waiting time, hospital length of stay, citation counts, and environmental exposure measurements are often skewed. Count data may follow distributions such as binomial or Poisson. Time-to-event data may need survival methods rather than ordinary averages.

Skewness describes asymmetry. A right-skewed distribution has a long tail to the right, often caused by a few very large values. A left-skewed distribution has a long tail to the left. Kurtosis describes tail behavior and the presence of unusually extreme values. These ideas matter because the mean, standard deviation, and many model assumptions can be sensitive to extreme observations.

Descriptive statistics

Descriptive statistics summarize data without trying to generalize beyond it. They are often the first layer of analysis because they make the dataset readable. Instead of staring at every row, you calculate measures that describe center, spread, position, and frequency.

The mean is the arithmetic average. It is useful when values are reasonably balanced, but it can be pulled by extreme values. The median is the middle value after sorting the data. It often gives a better sense of a typical value when the distribution is skewed. The mode is the most common value or category. It is especially useful for categorical data.

Measures of spread show how much variation exists. Variance measures the average squared deviation from the mean. Standard deviation, the square root of the variance, is easier to interpret because it is in the original unit of measurement. The range shows the difference between the smallest and largest values, though it can be dominated by outliers. The interquartile range focuses on the middle half of the data and is often more stable when extreme values exist.
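
As a small illustration of these measures, the sketch below uses Python's standard `statistics` module on a made-up list of waiting times. The data are hypothetical and include one extreme value on purpose, so the mean and range can be compared with the median and interquartile range.

```python
import statistics

# Hypothetical waiting times in minutes; the last value is an extreme case.
waits = [4, 5, 5, 6, 7, 8, 9, 10, 12, 60]

mean = statistics.mean(waits)          # pulled upward by the 60
median = statistics.median(waits)      # middle of the sorted values
mode = statistics.mode(waits)          # most common value
variance = statistics.variance(waits)  # sample variance (divides by n - 1)
std_dev = statistics.stdev(waits)      # square root of the variance
value_range = max(waits) - min(waits)  # dominated by the outlier

# quantiles(n=4) returns the three quartile cut points Q1, Q2, Q3.
q1, q2, q3 = statistics.quantiles(waits, n=4)
iqr = q3 - q1                          # spread of the middle half

print(f"mean={mean:.1f} median={median} mode={mode}")
print(f"sd={std_dev:.1f} range={value_range} IQR={iqr:.2f}")
```

With this list the mean sits well above the median, and the range is far larger than the interquartile range, because a single observation drives both.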

Data visualization and exploration

Visualization helps statistical analysis because it shows patterns that summary numbers can hide. A mean and standard deviation can look ordinary while the chart shows two clusters, a curved relationship, a coding error, or a few extreme observations driving the result.

Histograms show the distribution of numerical values. Bar charts show counts or proportions for categories. Box plots show the median, spread, and potential outliers. Scatter plots show relationships between two numerical variables. Density plots give a smoothed view of a distribution. These charts are not decoration. They are part of checking whether the data behaves the way your planned method assumes.

Exploratory data analysis, often shortened to EDA, is the habit of inspecting data before formal modeling. It includes summaries, charts, missing value checks, distribution checks, and early relationship checks. The point is not to hunt for any pattern that looks exciting. It is to understand the data well enough that later analysis is less blind.

Types of statistical analysis

Statistical analysis is not one type of work. The best category depends on the question. Some analysis describes what is already in front of you. Some analysis tries to draw conclusions beyond the sample. Some analysis predicts. Some analysis explores. Some analysis tests a claim that was defined in advance.

Descriptive statistical analysis

Descriptive statistical analysis summarizes data as it exists. It answers questions such as: What is the average? What is the most common category? How spread out are the values? Which group has the highest rate? What does the distribution look like?

This type of analysis is often underestimated because it seems simple. In reality, poor descriptive work can damage the rest of the analysis. If the distribution is skewed and only the mean is reported, the summary may mislead. If the sample size is small but percentages are presented with too much confidence, the result may look more stable than it is. If missing values are ignored, the summary may describe only the easiest data to collect.

Inferential statistical analysis

Inferential statistical analysis uses sample data to make careful claims about a wider population. This is where sampling, probability, confidence intervals, and hypothesis tests enter the picture. The question changes from “what happened in this dataset?” to “what can this dataset tell us about the population it came from?”

Inference always carries uncertainty. A sample may differ from the population by random chance. It may also be biased because of how it was collected. Inferential statistics gives tools for handling random variation, but it cannot rescue a badly designed study on its own. A precise calculation based on a poor sample can still be poor evidence.
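
A minimal simulation can make the difference between random error and bias concrete. Everything below is invented for illustration: the population, the response rule (only people scoring above 70 respond), and the sample sizes.

```python
import random
import statistics

rng = random.Random(42)  # fixed seed so the sketch is repeatable

# Hypothetical population of 10,000 scores centred near 60.
population = [rng.gauss(60, 15) for _ in range(10_000)]
pop_mean = statistics.mean(population)

# Random sample: every member has the same chance of selection.
random_sample = rng.sample(population, 200)

# Biased sample: only people scoring above 70 chose to respond.
responders = [score for score in population if score > 70]
biased_sample = rng.sample(responders, 200)

random_error = abs(statistics.mean(random_sample) - pop_mean)
biased_error = abs(statistics.mean(biased_sample) - pop_mean)

print(f"population mean:     {pop_mean:.1f}")
print(f"random sample error: {random_error:.1f}")
print(f"biased sample error: {biased_error:.1f}")
```

Both samples have the same size, so a standard error computed from either one would look similarly precise. Only the random sample is aimed at the right target.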

Predictive analysis

Predictive analysis uses patterns in existing data to estimate unknown or future outcomes. A model may predict disease risk, exam performance, equipment failure, hospital readmission, species occurrence, or time to recovery. Regression models are often used for prediction, though machine learning methods may also be used when the main goal is predictive accuracy.

The weakness of predictive analysis is that a model can perform well on the data it was built from and poorly on new data or under new conditions. Overfitting is a common problem. The model learns details that belong to the training data rather than patterns that hold more widely. That is why validation, test data, and honest performance checks are part of serious predictive work.
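
The sketch below illustrates overfitting with two deliberately simple models on simulated data: a straight line fitted by least squares, and a nearest-neighbour rule that memorises every training point. The data-generating rule, the noise level, and the function names are assumptions made for this example.

```python
import random

rng = random.Random(0)

def make_data(n):
    """Hypothetical data: y depends linearly on x, plus random noise."""
    xs = [rng.uniform(0, 10) for _ in range(n)]
    ys = [2.0 * x + rng.gauss(0, 3) for x in xs]
    return xs, ys

def fit_line(xs, ys):
    """Ordinary least squares with one predictor."""
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = my - slope * mx
    return lambda x: intercept + slope * x

def fit_nearest(xs, ys):
    """1-nearest-neighbour: returns the y of the closest training x."""
    pairs = list(zip(xs, ys))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]

def mse(model, xs, ys):
    """Mean squared prediction error."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)

train_x, train_y = make_data(50)
test_x, test_y = make_data(200)   # fresh data the models never saw

models = {"line": fit_line(train_x, train_y),
          "nearest": fit_nearest(train_x, train_y)}
results = {name: (mse(m, train_x, train_y), mse(m, test_x, test_y))
           for name, m in models.items()}

for name, (train_err, test_err) in results.items():
    print(f"{name:8s} train MSE={train_err:6.2f}  test MSE={test_err:6.2f}")
```

The memorising model has zero error on the training data because it reproduces the noise exactly. Its error on fresh data is the honest measure, which is why the comparison on held-out data matters more than the fit to the original data.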

Prescriptive analysis

Prescriptive analysis goes one step further and asks what should be done. It may use statistical estimates, forecasts, simulation, optimization, or decision analysis to compare possible actions. A hospital may use it to plan staffing. A research team may use it to choose a sample size. An engineering team may use it to set safety margins. A policy team may use it to weigh expected outcomes under different choices.

This type of analysis depends heavily on assumptions. If the objective is poorly defined, or if the costs and risks are incomplete, the recommended action can look more rational than it is. Prescriptive analysis should make assumptions clear rather than hiding them behind a final recommendation.
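
Choosing a sample size is one case where the assumptions can be written down explicitly. The sketch below uses the standard normal-approximation formula for comparing two group means with equal group sizes, n per group = 2 × ((z for the significance level + z for the power) × σ / δ)². The standard deviation `sigma`, the smallest difference worth detecting `delta`, and the function name are hypothetical inputs chosen for illustration.

```python
import math
from statistics import NormalDist

def n_per_group(sigma, delta, alpha=0.05, power=0.80):
    """Approximate sample size per group for a two-sided comparison of two means.

    Assumes equal group sizes, a common known standard deviation (sigma),
    and a normal approximation. delta is the difference to be detected.
    """
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) * sigma / delta) ** 2)

# Hypothetical planning values: SD of 10 points, 5-point difference of interest.
print(n_per_group(sigma=10, delta=5))
# Halving the difference of interest roughly quadruples the requirement.
print(n_per_group(sigma=10, delta=2.5))
```

The recommendation changes sharply when `delta` or `sigma` changes, which is the reason those inputs should be stated rather than buried.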

Exploratory analysis

Exploratory analysis is used when the goal is to understand the data, discover patterns, generate questions, or find problems before committing to a formal model. It is common at the beginning of research and analysis projects. Analysts look at distributions, relationships, outliers, missing data, group differences, and surprising patterns.

The danger is treating exploratory findings as if they were planned tests. If you search long enough through enough variables, something will often look interesting by chance. Exploratory analysis is useful when it is honest about its role. It can suggest hypotheses, but it should not pretend that every discovered pattern was predicted from the start.
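
The arithmetic behind that warning is short. Assuming the tests are independent and that no real effects exist, the chance of at least one false alarm across m tests at threshold alpha is 1 − (1 − alpha)^m. The function name below is made up for this sketch.

```python
def chance_of_false_alarm(num_tests, alpha=0.05):
    """Probability of at least one 'significant' result by chance alone.

    Assumes independent tests and that every null hypothesis is true.
    """
    return 1 - (1 - alpha) ** num_tests

for m in (1, 5, 20, 100):
    print(f"{m:3d} tests: {chance_of_false_alarm(m):.2f}")
```

Under these assumptions, twenty unplanned comparisons give roughly a 64 percent chance that at least one of them looks significant.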

Confirmatory analysis

Confirmatory analysis tests a specific claim or hypothesis that was set before looking at the result. This is common in experiments, clinical trials, and formal research designs. The method, outcome, comparison, and threshold for evidence are usually defined in advance.

The strength of confirmatory analysis is discipline. It reduces the temptation to keep changing the question until the data says something attractive. The weakness is that it can become too narrow if the original question was poorly framed. Good work often uses exploration to understand the data and confirmatory analysis to test the claims that truly need testing.

How statistical methods are used in analysis

Statistical analysis uses different methods at different stages of the work. The purpose of this section is not to list every method in detail. For a broader overview of the main categories of techniques, see our article on statistical methods. Here, the focus is on how those methods function inside an analysis workflow.

Describing the data

Most analysis begins with descriptive methods. These include counts, percentages, averages, medians, standard deviations, frequency tables, and visual summaries. Descriptive work helps the analyst understand the dataset before making broader claims.

This step can reveal missing values, unusual observations, skewed distributions, coding errors, or group imbalances. Skipping this stage can make later results look cleaner than they really are.

Estimating uncertainty

When analysis uses sample data to learn about a wider population, uncertainty becomes central. Estimation methods help answer questions such as: What is the likely population average? What is the likely proportion? How large is the difference between two groups? How precise is the estimate?

Confidence intervals are useful because they show a range of plausible values rather than only one number. A narrow interval suggests greater precision. A wide interval suggests the data does not provide a very tight answer.
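
A rough sketch of how interval width responds to sample size is shown below. It uses a normal approximation for simplicity; with a sample as small as eight, a t-based interval would usually be preferred and would be somewhat wider. The scores are hypothetical, and the larger dataset simply repeats them to hold the spread roughly constant.

```python
import math
import statistics
from statistics import NormalDist

def mean_ci(values, confidence=0.95):
    """Normal-approximation confidence interval for a mean."""
    n = len(values)
    mean = statistics.mean(values)
    std_err = statistics.stdev(values) / math.sqrt(n)
    z = NormalDist().inv_cdf(0.5 + confidence / 2)  # about 1.96 for 95%
    return mean - z * std_err, mean + z * std_err

small = [72, 65, 80, 58, 75, 69, 77, 61]  # hypothetical test scores
large = small * 8                          # same values, eight times the n

low_s, high_s = mean_ci(small)
low_l, high_l = mean_ci(large)
width_small = high_s - low_s
width_large = high_l - low_l

print(f"n={len(small):2d}: {low_s:.1f} to {high_s:.1f}")
print(f"n={len(large):2d}: {low_l:.1f} to {high_l:.1f}")
```

The centre of the interval does not move, but the interval narrows as the sample grows.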

Testing claims

Hypothesis testing is used when the analysis needs to evaluate whether the data provides evidence against a default assumption. This is common in experiments, A/B tests, clinical research, quality control, education research, and many other settings.

A statistical test can help assess whether an observed difference or relationship is larger than expected under a specified assumption. However, a test result does not prove importance, causation, or practical value. It must be interpreted alongside effect size, study design, measurement quality, and uncertainty.
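
One way to see what a test is doing is an exact permutation test, sketched below for two small hypothetical groups. It is used here as an illustration because it needs few assumptions, not because it is the method the settings above would necessarily use. The default assumption is that group labels are interchangeable, so every possible relabelling of the ten values is checked.

```python
from itertools import combinations
from statistics import mean

# Hypothetical outcomes for two small groups.
treated = [12, 14, 15, 16, 18]
control = [8, 9, 10, 11, 13]

observed = mean(treated) - mean(control)
pooled = treated + control
n = len(treated)

extreme = 0
total = 0
# Every way of choosing which 5 of the 10 values are labelled "treated".
for idx in combinations(range(len(pooled)), n):
    group_a = [pooled[i] for i in idx]
    group_b = [pooled[i] for i in range(len(pooled)) if i not in idx]
    diff = mean(group_a) - mean(group_b)
    # Two-sided: count relabellings at least as extreme as what was observed.
    if abs(diff) >= abs(observed) - 1e-9:
        extreme += 1
    total += 1

p_value = extreme / total
print(f"observed difference: {observed:.1f}")
print(f"{extreme} of {total} relabellings are at least as extreme, p = {p_value:.4f}")
```

The p-value says how unusual the observed difference is under relabelling. It says nothing about whether a difference of that size matters, which is why the effect size is printed alongside it.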

Measuring relationships

Many analyses ask whether variables are related. Correlation analysis can show whether two variables tend to move together and whether the relationship is positive, negative, weak, or strong. Scatter plots and other visual checks are important because correlations can be distorted by outliers, curved relationships, and hidden groups.

Correlation is useful as a signal, but it should not be treated as proof of cause and effect. When the question is causal, the analysis must consider design, confounding, comparison groups, timing, and alternative explanations.
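
The effect of a single outlier on a correlation can be shown with a few made-up numbers. The helper `pearson_r` below is written out by hand for clarity, and both the data and the added point are hypothetical.

```python
import math

def pearson_r(xs, ys):
    """Pearson correlation coefficient for two equal-length lists."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)

x = [1, 2, 3, 4, 5, 6]
y = [3, 1, 4, 1, 5, 2]   # little visible relationship with x

r_without = pearson_r(x, y)
r_with = pearson_r(x + [30], y + [30])   # one extreme point added

print(f"without outlier: r = {r_without:.2f}")
print(f"with outlier:    r = {r_with:.2f}")
```

Six of the seven points show almost no relationship, yet one distant observation is enough to push the coefficient close to 1. A scatter plot would reveal this immediately.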

Modeling outcomes

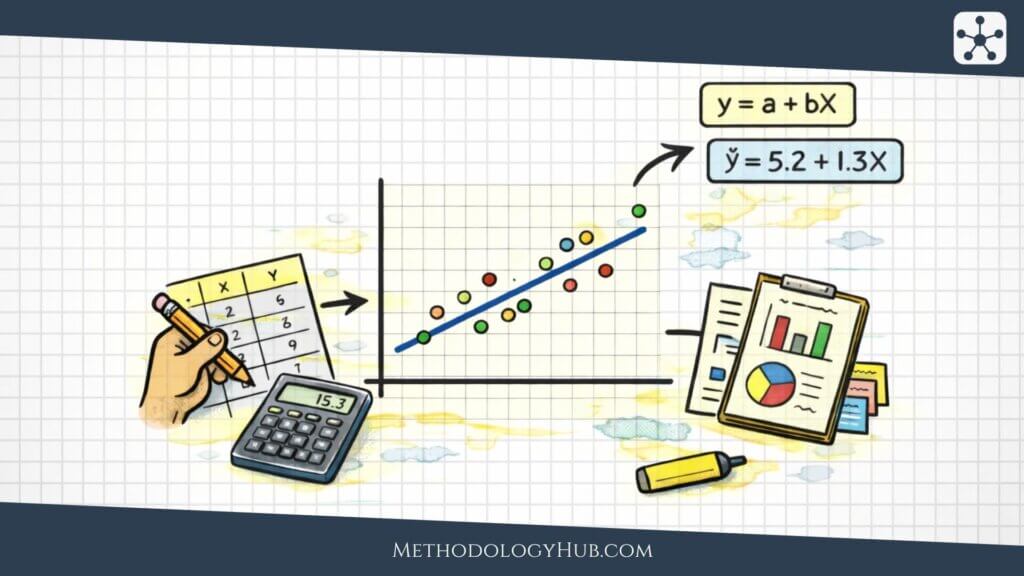

Regression analysis and related models are used when the goal is to model an outcome using one or more predictors. This can help estimate relationships, adjust for other variables, make predictions, or compare groups in a more flexible way.

The outcome type matters. A continuous outcome, such as blood pressure or test score, may call for one type of model. A yes-or-no outcome, such as disease status, needs a different structure. A count outcome, such as number of errors or hospital visits, may require another approach. The method should fit the question and the data, not just the analyst’s habits.
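
As a rough sketch, the matching of outcome type to model family can be written as a lookup. The pairings below are conventional starting points rather than rules, and the type-guessing heuristic is an assumption of this example that will misclassify some data (integer test scores, for instance, would be labelled as counts).

```python
# Conventional first choices; the right model still depends on the
# question, the design, and the assumptions that can be defended.
STARTING_POINTS = {
    "continuous": "linear regression",
    "binary": "logistic regression",
    "count": "Poisson regression",
}

def outcome_type(values):
    """Crude guess at the outcome type from the observed values."""
    if set(values) <= {0, 1}:
        return "binary"
    if all(isinstance(v, int) and v >= 0 for v in values):
        return "count"
    return "continuous"

examples = {
    "blood pressure": [118.5, 131.0, 124.2],
    "disease status": [0, 1, 1, 0],
    "hospital visits": [0, 3, 2, 7],
}
for name, values in examples.items():
    kind = outcome_type(values)
    print(f"{name}: {kind} outcome, consider {STARTING_POINTS[kind]}")
```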

Supporting decisions under uncertainty

Some statistical analysis is used to support decisions where the future is uncertain. In those cases, methods such as risk analysis, scenario analysis, sensitivity analysis, and simulation may be used. The aim is not to remove uncertainty, but to make it visible enough that people can compare options more honestly.

These approaches are useful when one fixed estimate hides too much variation. For example, a project budget, clinical recruitment plan, engineering failure estimate, or public health intervention may depend on several uncertain inputs rather than one certain number.
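
A minimal Monte Carlo sketch of the project budget case is shown below. Every number in it is invented: the cost ranges, the triangular distributions, and the 20 percent chance of a delay penalty are assumptions used only to show the mechanics.

```python
import random
import statistics

rng = random.Random(7)  # fixed seed so the sketch is repeatable

def simulate_total_cost():
    """One possible project outcome; all ranges are made-up illustrations."""
    labour = rng.triangular(80, 140, 100)     # low, high, most likely
    materials = rng.triangular(40, 90, 50)
    delay_penalty = 25 if rng.random() < 0.2 else 0
    return labour + materials + delay_penalty

totals = [simulate_total_cost() for _ in range(10_000)]

# quantiles(n=10) returns nine cut points: the 10th, 20th, ..., 90th percentiles.
cuts = statistics.quantiles(totals, n=10)
p10, p50, p90 = cuts[0], cuts[4], cuts[8]

single_estimate = 100 + 50  # sum of the "most likely" values, no penalty
print(f"single estimate: {single_estimate}")
print(f"simulated range: p10={p10:.0f}, p50={p50:.0f}, p90={p90:.0f}")
```

In this setup the sum of the most likely values sits below the simulated median, because each input has more room to run high than low. That asymmetry is invisible in a single fixed estimate.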

Choosing the right statistical analysis approach

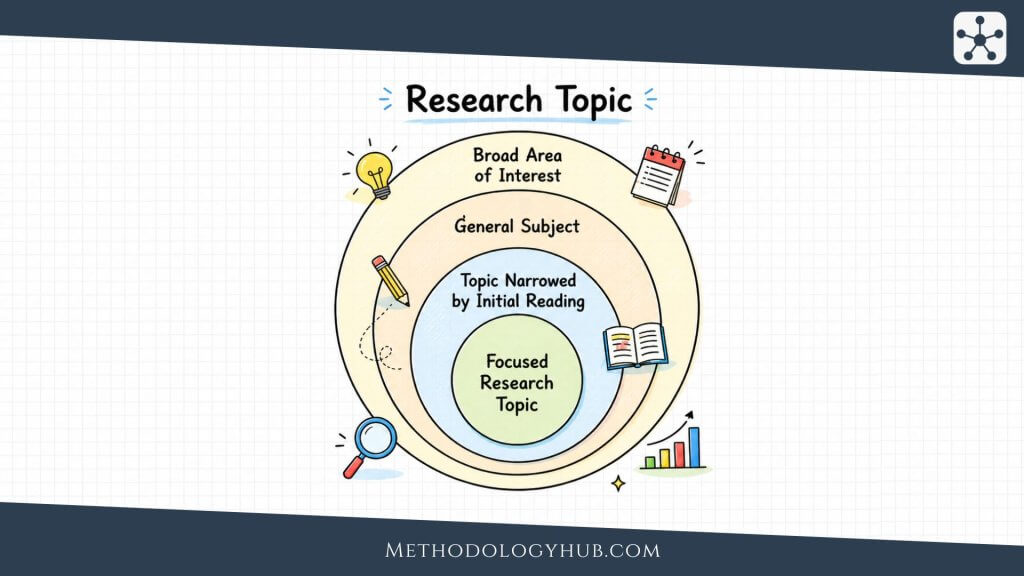

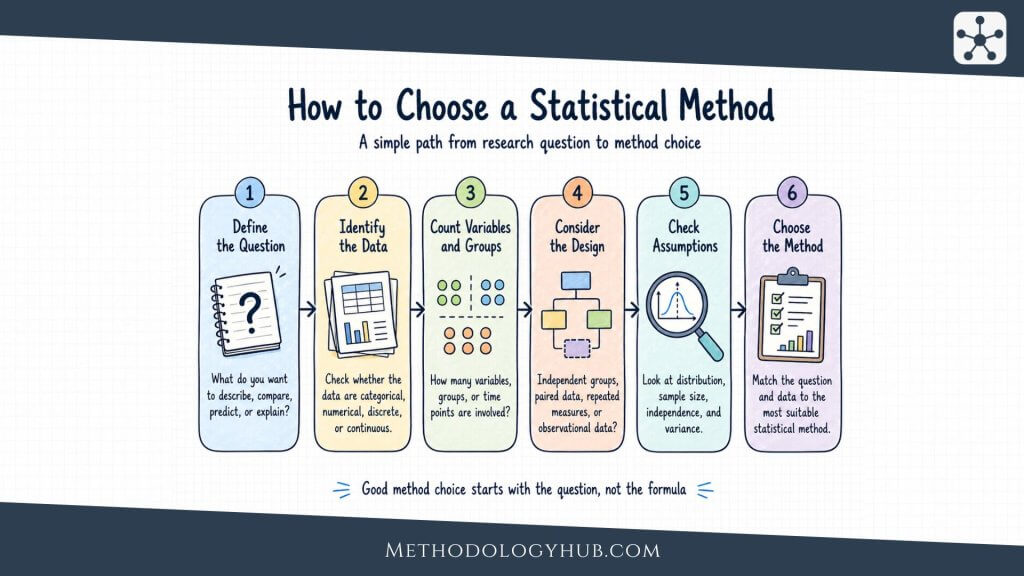

Choosing the right statistical analysis approach starts with the research question, not with the software menu. A common mistake is to begin by asking which test is popular. A better start is to ask what kind of answer you need. Are you describing one dataset? Comparing groups? Testing a claim? Measuring association? Predicting an outcome? Estimating risk? Looking for hidden structure? Trying to support a decision?

Once the question is clear, the next issue is the data. What type of outcome variable do you have? How many groups are involved? Are observations independent? Is the same person measured more than once? Are you working with counts, proportions, times, categories, ranks, or continuous measurements? Is the sample large enough? Are there missing values? Are there strong outliers?

A decision-tree style way to choose an analysis approach

A decision tree is not a substitute for statistical judgment, but it gives a practical route through common choices. It is useful because it starts with the problem instead of the method name.

Match the approach to the objective

If the objective is description, keep the analysis descriptive. Use measures of center, spread, frequency, and visualization. Do not force a hypothesis test into a situation where the real task is to understand the data.

If the objective is comparison, identify what is being compared. Two means, several means, two proportions, paired measurements, independent groups, and repeated measurements all call for different handling. The design is part of the answer as much as the variable type.

If the objective is relationship analysis, decide whether you need association or modeling. Correlation may be enough for a simple relationship between two variables. Regression is better when you need adjustment, prediction, or a more detailed model.

If the objective is prediction, focus on validation, error, and performance on new data. A model whose coefficients have small p-values is not automatically a good predictive model. Prediction needs honest testing beyond the data used to build the model.

If the objective is causal inference, the design becomes central. You need to ask how treatment, exposure, or policy assignment happened. Statistical adjustment can help, but it cannot fix every source of bias. Causal claims need a defensible story about what would have happened otherwise.

Check assumptions before trusting the result

Every analysis approach brings assumptions. Some methods assume independent observations. Some assume a certain distribution. Some assume a linear relationship. Some assume equal variances across groups. Some assume proportional hazards, no unmeasured confounding, or a correctly specified model.

Assumption checking is not a ceremonial step at the end. It affects whether the result should be trusted, modified, or replaced with another method. Residual plots, distribution checks, influence diagnostics, missing data analysis, and sensitivity checks can reveal problems that a clean output table hides.

Common errors in method selection

The most common error is choosing a method by habit. Someone learns a t-test and uses it for everything. Someone learns regression and treats every problem as a regression problem. Someone sees non-normal data and panics without asking whether the method is actually sensitive to that issue. Someone applies a complex model to a small dataset and gets an answer that looks sophisticated but rests on very little evidence.

Another frequent error is ignoring dependence. Repeated measurements from the same person, students within the same classroom, patients within the same clinic, and monthly observations over time are not independent in the same way as separate randomly sampled observations. Treating dependent data as independent can make results look more certain than they are.
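
For clustered data, a commonly used approximation is the design effect, 1 + (m − 1) × ICC, where m is the cluster size and ICC is the intraclass correlation. The sketch below assumes equal cluster sizes, and the ICC of 0.1 is a hypothetical value picked for illustration.

```python
def effective_sample_size(n_total, cluster_size, icc):
    """Approximate number of independent observations the data are worth.

    Assumes equal-sized clusters; icc is the intraclass correlation.
    """
    design_effect = 1 + (cluster_size - 1) * icc
    return n_total / design_effect

# Hypothetical study: 600 students in classes of 30, ICC of 0.1.
n_eff = effective_sample_size(n_total=600, cluster_size=30, icc=0.1)
print(f"600 students behave like about {n_eff:.0f} independent observations")
```

Treating all 600 students as independent would overstate the amount of information by a factor of nearly four in this example.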

A third error is confusing statistical significance with usefulness. A tiny effect can become statistically significant in a very large sample. A practically meaningful effect can fail to reach significance in a small study. Method choice should serve the question, not just the hunt for a small p-value.
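
The interaction between effect size and sample size can be sketched with a simple two-sample z-test. The calculation assumes equal group sizes and a known common standard deviation, and the effect sizes and sample sizes are hypothetical.

```python
import math
from statistics import NormalDist

def two_sided_p(effect_in_sd, n_per_group):
    """Two-sample z-test p-value for a standardized mean difference.

    Assumes equal group sizes and a known common standard deviation.
    """
    z = effect_in_sd / math.sqrt(2 / n_per_group)
    return 2 * (1 - NormalDist().cdf(abs(z)))

# A tiny effect (0.02 standard deviations) at two sample sizes.
print(f"tiny effect, n=100 per group:     p = {two_sided_p(0.02, 100):.3f}")
print(f"tiny effect, n=100,000 per group: p = {two_sided_p(0.02, 100_000):.6f}")
# A moderate effect (0.5 standard deviations) in a very small study.
print(f"moderate effect, n=10 per group:  p = {two_sided_p(0.5, 10):.3f}")
```

The same tiny effect moves from clearly non-significant to highly significant purely because the sample grew, while a much larger effect misses the usual threshold in a small study.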

Interpreting statistical results

Interpreting statistical results is where analysis often either becomes useful or goes wrong. The output may include estimates, standard errors, confidence intervals, p-values, coefficients, odds ratios, model fit measures, residual diagnostics, or classification metrics. The job is not to repeat the table. The job is to explain what the result says, what it does not say, and how strong the evidence is.

Statistical significance

Statistical significance means that a result at least as extreme as the one observed would be unlikely if a specified null hypothesis were true, given the model assumptions and the threshold used. It does not mean the result is large, important, causal, or free from bias. A statistically significant result can still be trivial. A non-significant result can still be inconclusive rather than proof of no effect.

Practical significance

Practical significance asks whether the size of the result has real-world value. A medication may reduce symptoms slightly. A teaching intervention may raise test scores by a small amount. Whether that is useful depends on cost, risk, scale, and context.

Effect size

Effect size measures how large a difference or relationship is. It gives substance to a result. Common effect size measures include mean differences, standardized mean differences, odds ratios, risk ratios, correlation coefficients, and regression coefficients. Reporting effect size helps prevent the analysis from becoming a yes-or-no ritual around p-values.
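
As one example, the standardized mean difference known as Cohen's d divides the difference in group means by a pooled standard deviation. The scores below are hypothetical.

```python
import math
import statistics

def cohens_d(group_a, group_b):
    """Standardized mean difference using the pooled standard deviation."""
    na, nb = len(group_a), len(group_b)
    va, vb = statistics.variance(group_a), statistics.variance(group_b)
    pooled_sd = math.sqrt(((na - 1) * va + (nb - 1) * vb) / (na + nb - 2))
    return (statistics.mean(group_a) - statistics.mean(group_b)) / pooled_sd

# Hypothetical exam scores under two teaching methods.
new_method = [72, 74, 76, 78, 80]
usual_method = [71, 73, 75, 77, 79]

raw_difference = statistics.mean(new_method) - statistics.mean(usual_method)
d = cohens_d(new_method, usual_method)

print(f"raw difference: {raw_difference} points")
print(f"Cohen's d:      {d:.2f}")
```

Reporting both the raw difference (in points) and the standardized value lets readers judge the size of the effect in the original units and relative to the spread of scores.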

Confidence intervals

Confidence intervals show a range of plausible values under the method used. They help readers see precision. A narrow interval suggests a more precise estimate. A wide interval suggests more uncertainty. Confidence intervals are often more informative than p-values because they show both direction and possible size.

Model assumptions and diagnostics

Model diagnostics check whether the model is behaving reasonably. In linear regression, residual plots may reveal nonlinearity, unequal variance, outliers, or influential cases. In logistic regression, calibration and classification performance may need attention. In time series, autocorrelation can break assumptions. In survival analysis, proportional hazards may need checking.
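
A small residual check illustrates the linear regression case. The data below are constructed so that y is exactly x squared, and a straight line is then fitted to them. The helper function and the data are assumptions made for this sketch.

```python
def fit_line(xs, ys):
    """Ordinary least squares with one predictor; returns (intercept, slope)."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sxy / sxx
    return my - slope * mx, slope

x = [0, 1, 2, 3, 4]
y = [v ** 2 for v in x]          # a curved relationship: 0, 1, 4, 9, 16

intercept, slope = fit_line(x, y)
residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]

print(f"fitted line: y = {intercept:.1f} + {slope:.1f}x")
print("residuals:", residuals)
```

The residuals are positive at both ends and negative in the middle. That U-shaped pattern is the signature of a curved relationship being forced into a straight line, even though the slope on its own looks perfectly reasonable.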

Diagnostics do not always give a simple pass or fail. They often show where the model is weak, where sensitivity analysis is needed, or where a different model may be more honest.

Clear reporting of results

Clear reporting should state what was measured, which method was used, why that method was appropriate, what the main estimate was, how uncertain it was, and what limitations remain. It should avoid pretending the analysis proves more than the design supports.

Statistical analysis example

Suppose a researcher wants to know whether a new teaching method improves exam scores. The raw data may include students, class groups, pre-test scores, post-test scores, attendance, demographic variables, and final exam results. The statistical analysis would not begin with a software command. It would begin with the question: what exactly counts as improvement?

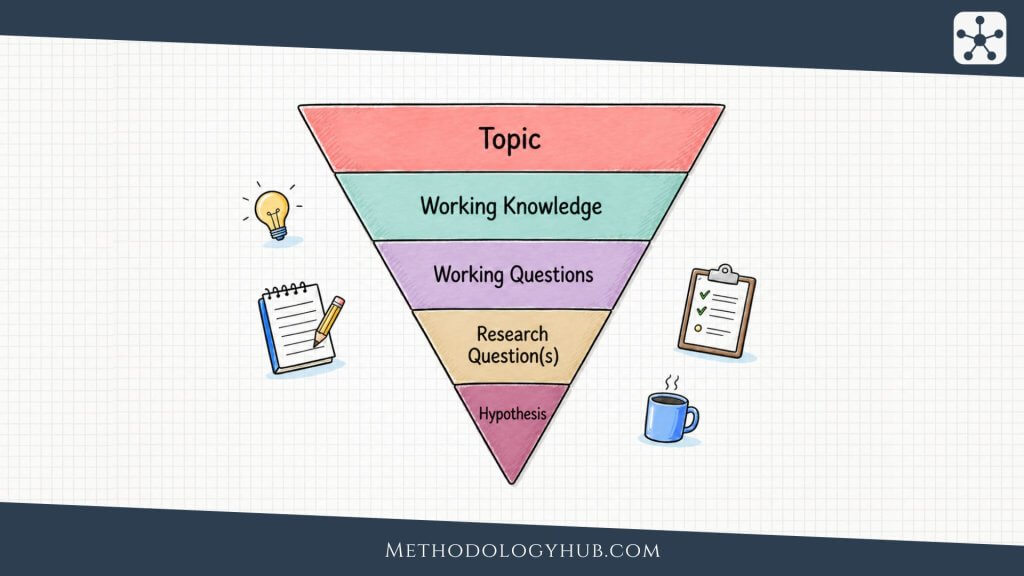

Step 1: Define the question and outcome

The researcher needs to define the outcome before choosing a statistical method. The outcome might be the final exam score, the change from pre-test to post-test, the probability of passing, or the number of points improved. Each outcome leads to a different analysis structure.

Step 2: Inspect the data

The researcher would check the sample size, missing values, unusual scores, group balance, and score distributions. If one class has many more missing results than another, or if the groups started at very different baseline levels, those issues matter before any formal test is interpreted.
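
A first pass at the missing-value check might look like the sketch below. The records, group labels, and the use of `None` to mark a missing score are all hypothetical.

```python
from collections import defaultdict

# Hypothetical records: (class group, post-test score); None marks a missing score.
records = [
    ("A", 71), ("A", 64), ("A", None), ("A", 80), ("A", 77),
    ("B", 69), ("B", None), ("B", None), ("B", 58), ("B", None),
]

counts = defaultdict(lambda: {"n": 0, "missing": 0})
for group, score in records:
    counts[group]["n"] += 1
    if score is None:
        counts[group]["missing"] += 1

missing_rate = {group: c["missing"] / c["n"] for group, c in counts.items()}

for group, rate in sorted(missing_rate.items()):
    print(f"class {group}: {rate:.0%} of scores missing")
```

If one class is missing three times as many scores as the other, any comparison of averages describes only the students who happened to be measured.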

Step 3: Summarize the groups

Descriptive statistics would show the average score, median score, spread, and score distribution in each group. Charts may reveal whether the improvement is broad across many students or driven by a few extreme cases.

Step 4: Choose an appropriate method

The method depends on the design. If two independent classes are compared, the analysis is different from a before-and-after study of the same students. If the outcome is a continuous score, one approach may fit. If the outcome is pass or fail, a different approach is needed. If students are grouped within classrooms, the dependence between students in the same class may need attention.
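
The sketch below shows why the design changes the analysis, using hypothetical pre-test and post-test scores for the same five students. It compares the standard error of the average gain when the pairing is respected with the standard error obtained by wrongly treating the two sets of scores as independent groups.

```python
import math
import statistics

# Hypothetical scores for the same five students, before and after.
pre = [60, 70, 80, 90, 50]
post = [64, 73, 85, 94, 53]

# Paired design: analyse each student's own change.
gains = [after - before for before, after in zip(pre, post)]
mean_gain = statistics.mean(gains)
se_paired = statistics.stdev(gains) / math.sqrt(len(gains))

# Same numbers treated (incorrectly here) as two independent groups.
se_independent = math.sqrt(
    statistics.variance(pre) / len(pre) + statistics.variance(post) / len(post)
)

print(f"mean gain: {mean_gain:.1f} points")
print(f"standard error, paired:      {se_paired:.2f}")
print(f"standard error, independent: {se_independent:.2f}")
```

The students differ a great deal from one another but improve by similar amounts. Pairing removes the between-student variation, so the same data give a far more precise estimate of the gain.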

Step 5: Interpret the result in context

The final interpretation should not stop at whether a p-value is below a threshold. The researcher should report the size of the improvement, the uncertainty around it, whether the difference is educationally meaningful, what assumptions were made, and what limitations remain.

A useful way to think about it: statistical analysis is not only calculation. It is the full path from question to data, method, result, interpretation, and limitation.

Tools for statistical analysis

Statistical tools make analysis easier, but they do not replace method choice. The software can calculate quickly. It cannot decide whether your sample is biased, whether your outcome variable is appropriate, whether your model assumptions make sense, or whether the interpretation goes beyond the design.

R is widely used for statistical computing, graphics, and research workflows. It has extensive packages for regression, Bayesian modeling, time series, multivariate analysis, survival analysis, visualization, and reproducible reporting. Python is widely used in data analysis, machine learning, automation, and scientific computing. Libraries such as pandas, NumPy, SciPy, statsmodels, scikit-learn, matplotlib, and PyMC support a broad range of analysis tasks.

SPSS is common in social sciences, education, psychology, and applied research settings where menu-driven analysis is useful. SAS is common in regulated industries, clinical research, and enterprise analytics. Microsoft Excel is widely available and useful for simple summaries, charts, and small analyses, though it becomes risky for complex modeling, reproducibility, and larger workflows.

The tool should match the task and the user. A simple descriptive summary does not need a complex programming environment. A reproducible research project probably does. A regulated analysis may require audit trails and validated workflows. A small classroom example may only need a spreadsheet. The wrong tool is the one that makes the analysis harder to check.

Conclusion

Statistical analysis is useful because data is rarely self-explanatory. It needs to be described, checked, modeled, and interpreted with enough care that the conclusion does not outrun the evidence. Sometimes that means a simple table and a clear chart. Sometimes it means hypothesis testing, regression, causal inference, or simulation. The difficulty is not only knowing the names of the methods. It is knowing which job each method can honestly do.

The best statistical analysis usually begins with plain questions. What are we trying to learn? What kind of data do we have? How was it collected? What assumptions are we making? What would count as a useful answer? What uncertainty remains? Those questions keep the work grounded.

For most readers, the most practical improvement is not to memorize every test. It is to match the analysis to the problem. Use descriptive analysis when the task is summary. Use inference when the task is learning from a sample. Use regression when relationships need modeling. Use causal thinking only when the design can support cause-and-effect claims. Use risk analysis and simulation when uncertainty itself is the thing you need to understand.

FAQs on statistical analysis

What is statistical analysis?

Statistical analysis is the process of organizing, summarizing, modeling, and interpreting data to find patterns, answer questions, estimate uncertainty, and support decisions.

What is the difference between statistical analysis and statistical methods?

Statistical methods are the specific techniques used to work with data, such as regression, hypothesis testing, or confidence intervals. Statistical analysis is the broader process of choosing, applying, checking, and interpreting those methods to answer a question.

What are the main types of statistical analysis?

The main types include descriptive analysis, inferential analysis, predictive analysis, prescriptive analysis, exploratory analysis, and confirmatory analysis. Each type serves a different purpose depending on the goal.

When should I use statistical analysis?

Statistical analysis is useful whenever you need to understand data, compare groups, test a claim, identify relationships, estimate uncertainty, or make predictions based on evidence.

What is a p-value in statistical analysis?

A p-value is the probability of observing a result at least as extreme as the one found, assuming a specified null hypothesis is true. A small p-value suggests the observed result would be unusual under that assumption. It is not the probability that the null hypothesis is true, and it does not prove practical importance or causation.

How do I choose the right statistical analysis approach?

Start by defining your goal, then examine the outcome type, variable types, number of groups, study design, sample size, assumptions, and missing data. Choose an approach that matches the question and the structure of the data.

What tools are used for statistical analysis?

Common tools include R, Python, SPSS, SAS, and Excel. The right tool depends on the complexity of the analysis, reproducibility needs, regulatory requirements, and the user’s technical comfort.