Data Collection Methods are the procedures researchers use to gather, record, or extract information for a study. A method can be as simple as a structured questionnaire or as technical as a laboratory instrument, sensor, registry extract, or repeated field measurement. The method should fit the research question, the variables, the population, and the kind of evidence the study needs.

This article explains what data collection methods are, how they differ, how to choose among them, and how to plan data collection so the final dataset can be analysed with confidence.

What Are Data Collection Methods?

Data collection methods are the planned ways a researcher obtains information from people, objects, documents, environments, instruments, or existing records. The word “method” does not only mean a tool. It includes who or what is observed, when the information is gathered, how it is recorded, and what rules are followed so that each observation is comparable with the others.

In a laboratory study, data collection may involve measuring temperature, time, concentration, mass, or reaction rate under controlled conditions. In an education study, it may involve test scores, attendance records, writing samples, or responses to a questionnaire. In a health study, it may involve measurements taken by clinicians, patient-reported responses, imaging results, or information extracted from electronic records.

The method is not chosen after the research data arrive. It is part of the design. A study that asks about change over time needs repeated collection points. A study that compares two groups needs the same measure for both groups. A study that estimates prevalence needs a sampling method and a collection method that can reach the target population.

Data collection method, instrument, and source

Three words are often used together, but they do different jobs.

- Data collection method: the general way information is gathered, such as a survey, experiment, observation, test, record extraction, or sensor measurement.

- Instrument: the specific tool used in that method, such as a questionnaire, scale, checklist, laboratory device, form, interview schedule, or extraction template.

- Source: the person, object, document, system, population, or environment from which the information comes.

For example, a study of sleep and reaction time might use a daily sleep diary, a wearable device, and a computer-based reaction test. These are not the same method repeated three times. They are three collection routes that produce different kinds of evidence: self-reported sleep, device-recorded movement, and measured performance.

Data collection methods and the research question

A good method gives the research question a realistic path to evidence. If the question asks how often something occurs, the method must count occurrences. If the question asks whether one condition changes an outcome, the method must measure the outcome before, after, or across conditions. If the research question asks how a variable is distributed in a population, the method must collect comparable observations from a suitable sample.

Weak data collection often starts with a vague research question. A researcher may know the general research topic but not the unit of observation, the variable, the period, or the comparison. The collection plan then becomes too loose. It gathers material, but not necessarily the material needed to answer the question.

What data collection methods do for a study

Data collection methods give structure to the evidence. They help the researcher decide:

- what information will be collected

- which variables will be measured or recorded

- who or what will be included

- how many observations are needed

- how the information will be stored

- which analysis will be possible later

These decisions should be made before collection begins. Changing them midway may be necessary in some projects, but it should be documented. Otherwise, the final dataset may contain values collected under different rules, making comparison harder than it should be.

Types of Data

Before choosing data collection methods, researchers need to know what kind of data they intend to collect. The type of research data affects the instrument, the sampling method, the storage format, and the analysis. It also affects how much detail has to be written into the protocol.

Some studies collect one main type of research data. Others collect several. A clinical study may collect numerical laboratory values, categorical diagnosis codes, patient-reported ratings, and secondary information from records. A field study may collect species counts, GPS points, weather values, and photographs. Each type needs its own collection rules.

Categorical data

Categorical data place observations into groups. The groups may be named categories, ordered categories, or classification labels. Examples include blood type, school type, treatment group, disease status, soil class, employment category, and response options such as “agree”, “neutral”, and “disagree”.

Categorical data collection needs clear labels. If the categories overlap, the data collector may not know where to place an observation. If the categories are too narrow, many cases may fall into an “other” group. If the categories are too broad, the final dataset may hide differences that the study wanted to examine.

Numerical data

Numerical data are recorded as numbers that can be counted, measured, ranked, or calculated. Examples include age, test score, systolic blood pressure, rainfall, time to complete a task, number of errors, and concentration of a substance.

Numerical data need units. A value of 12 is not enough unless the dataset says whether it means 12 seconds, 12 months, 12 kilograms, 12 points, or 12 observations. Instruments should also record the level of precision. Measuring length to the nearest centimetre is different from measuring it to the nearest millimetre.

Discrete data

Discrete data take countable values. They often answer “how many” questions. Examples include the number of visits, number of correct answers, number of seedlings, number of hospital admissions, or number of pages in a document set.

Discrete data collection should define the counting rule. For example, a study that counts visits must decide whether cancelled appointments count, whether virtual visits count, and whether repeat visits on the same day are counted once or more than once.

Continuous data

Continuous data can take values along a scale. Examples include temperature, height, weight, distance, time, pH, enzyme activity, and voltage. In practice, the measuring instrument limits the number of recorded values, but the concept is still continuous.

Continuous data collection needs calibration, units, and timing. If two instruments are used, the study should record whether they give comparable readings. If measurements change quickly, the time of measurement may be as important as the value itself.

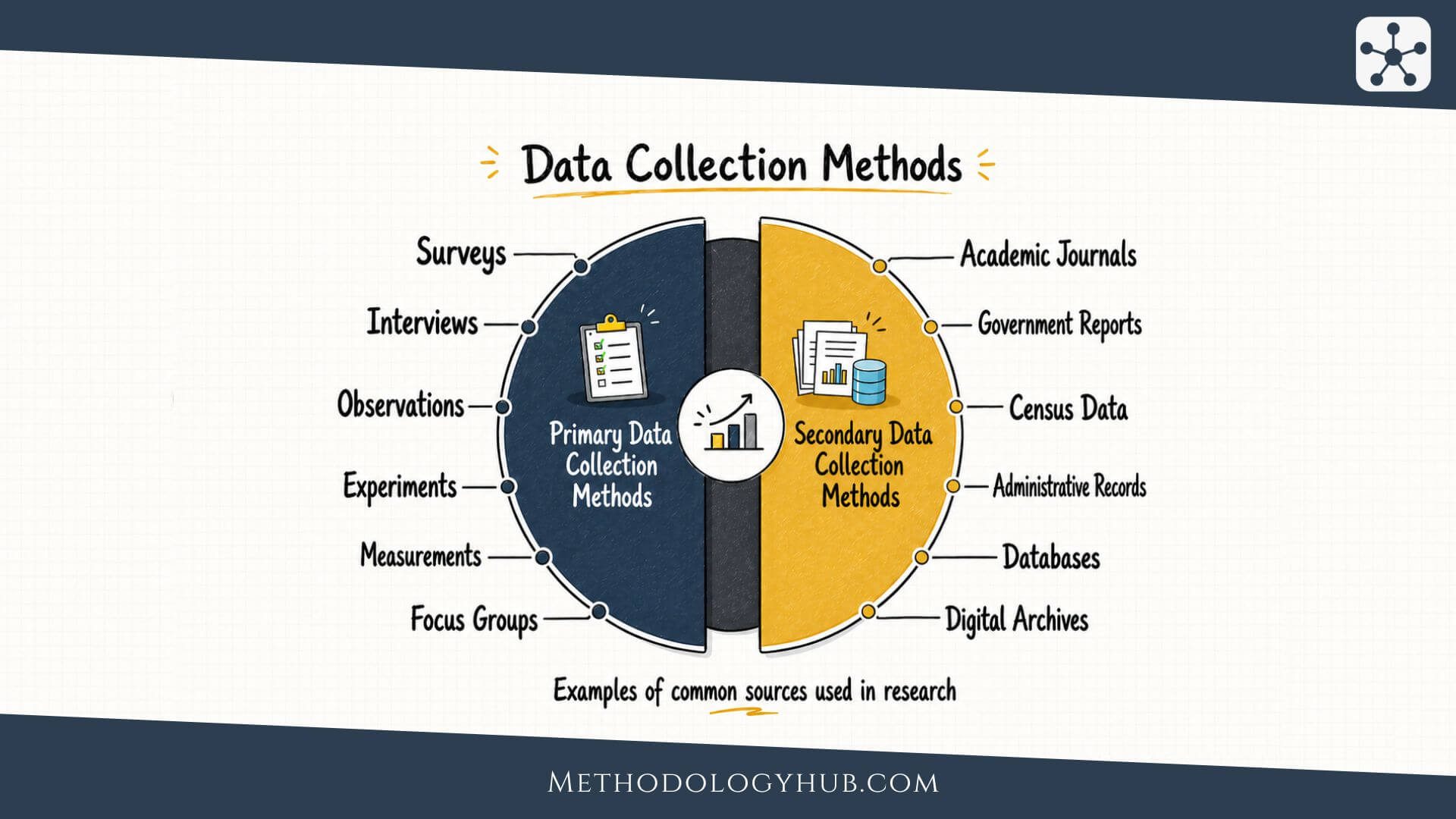

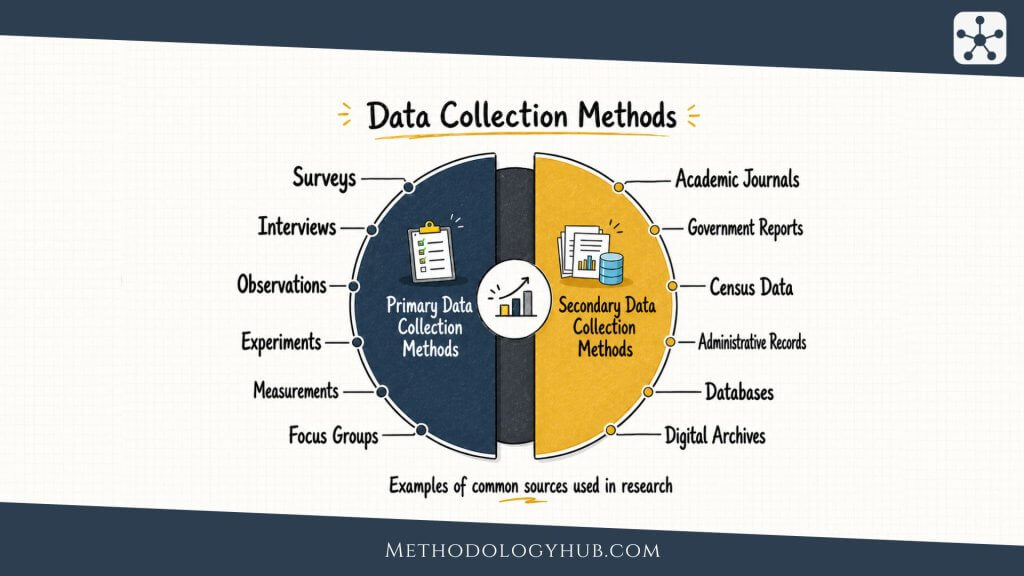

Primary data and secondary data

Primary data are collected for the current study. The researcher designs the instrument, chooses the source, defines the variables, and controls the collection process as far as the setting allows. Examples include new questionnaire responses, laboratory readings, field observations, and test results collected for a specific project.

Secondary data already exist before the current study begins. They may come from public datasets, administrative records, registries, archives, previous studies, maps, court records, census materials, or institutional databases. These sources can save time, but the researcher must check how the information was originally collected.

Objective and subjective data

Objective data are recorded through procedures that depend less on personal judgement. Examples include a blood test result, exam score, weight recorded on a calibrated scale, GPS coordinate, or timestamp from a system log.

Subjective data come from perception, judgement, rating, or self-report. Examples include pain rating, perceived workload, satisfaction with a service, confidence in a task, or a teacher’s rating of classroom behaviour. Subjective data can be useful, but the instrument must make the response task clear.

Variables, observations, and the dataset

A variable is a feature that can vary across observations. An observation is the unit being recorded: a person, sample, school, animal, laboratory trial, field plot, article, document, or event. A dataset is the organised collection of those observations and variables.

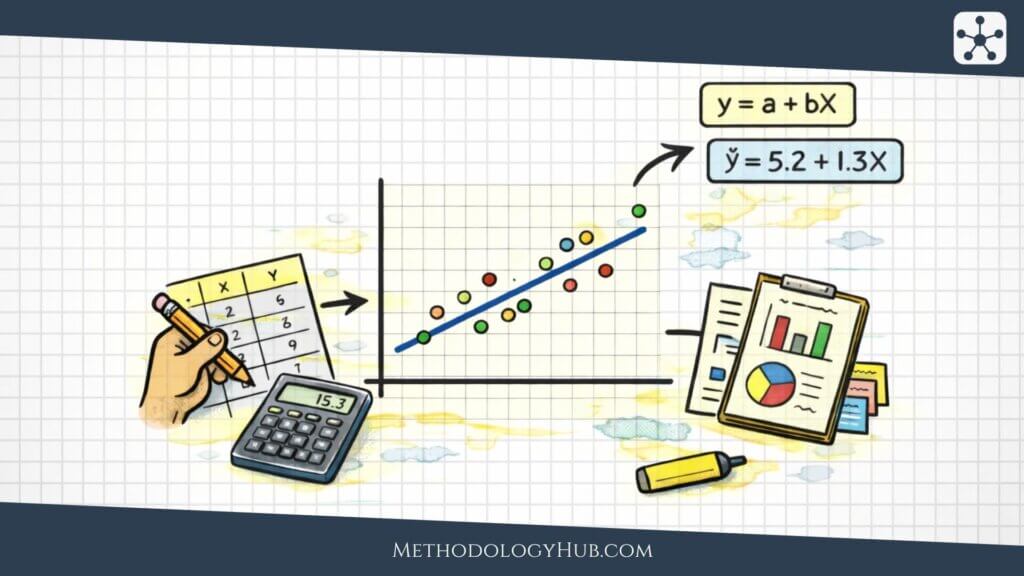

Many studies distinguish between independent and dependent variables. An independent variable is the condition, exposure, group, or predictor that the study examines. A dependent variable is the outcome or response that may change. In a study of fertilizer dose and plant height, fertilizer dose is the independent variable and plant height is the dependent variable. Other variables, such as soil type and sunlight, may need to be recorded because they can affect the outcome.

Data collection should make these roles visible. If the variables are not defined before collection, the dataset may contain notes that are interesting but difficult to analyse. The researcher may then have to recode, combine, or discard information that could have been collected more cleanly from the start.

Main Data Collection Methods

The main data collection methods include surveys, questionnaires, experiments, structured observation, tests, measurements, interviews, focus groups, document review, record extraction, sensors, and administrative data retrieval. Some are better for measuring variables across many cases. Others are better for obtaining detailed information from fewer cases or for recording what happens in a setting.

The same method can also look different across disciplines. Observation in ecology is not the same as observation in a classroom. A test in psychology is not the same as a laboratory assay in chemistry. The shared point is that each method needs a rule for what counts as research data.

Surveys and questionnaires

Surveys collect information from respondents using a set of questions. A questionnaire is the instrument that contains those questions. Surveys can be delivered online, on paper, by telephone, or in person. They are useful when the same information must be collected from many participants in the same format.

A strong questionnaire uses clear wording, consistent response options, and an order that does not confuse respondents. Closed questions are easier to code and compare. Open questions can capture wording that fixed options might miss, but they require a plan for how responses will be organised later.

Survey data can include categorical variables, numerical ratings, frequencies, dates, rankings, and self-reported behaviours. The researcher should decide in advance how each answer will appear in the dataset. For example, a five-point rating scale should have a documented code for each option.

Experiments and controlled measurements

Experiments collect data under conditions set by the researcher. The study may assign participants, samples, plots, or materials to different conditions and measure the outcome. Experiments are common in laboratory research, clinical research, education research, agriculture, psychology, engineering, and many other fields.

Controlled measurements need a written protocol. The protocol should state the condition, timing, instrument, unit, order of procedures, and rule for repeating or excluding a measurement. Without that detail, small differences in procedure can create differences in the data.

Experiments often involve independent and dependent variables. The independent variable may be a treatment, dose, condition, exposure, temperature, instruction, or material. The dependent variable may be a score, time, rate, physiological reading, yield, concentration, or performance measure.

Observation and field recording

Observation collects information by watching, counting, measuring, or recording events in a setting. It may be used in classrooms, clinics, workplaces, public spaces, natural habitats, archaeological sites, or laboratories. Observation can be open-ended, but research observation usually needs a structured form or a clear field protocol.

A structured observation form may record the time, place, event type, actor, duration, frequency, and context. In environmental studies, it may record weather, location, species, transect, plot, and measurement conditions. In education studies, it may record task type, teacher action, student response, and timing.

Observation works best when the researcher defines the event before collection. If the event is “student participation”, the protocol should say whether raising a hand, speaking without being called, writing in a shared document, or helping another student counts.

Interviews

Interviews collect information through spoken or written questions answered by participants. They can be tightly structured, partly structured, or open. In a structured interview, each participant receives the same questions in the same order. That makes responses easier to compare. In a more flexible interview, the researcher can ask follow-up questions, but the answers may be harder to code into a dataset.

Interviews are useful when the researcher needs explanations, timelines, definitions, or accounts that cannot be captured with fixed response options. They also help when the study population is small, specialised, or difficult to reach with a standard survey.

An interview protocol should include the question order, prompts, recording plan, note-taking rules, and a way to label each participant without exposing identity in the working dataset.

Tests and assessments

Tests and assessments collect data by asking participants to complete tasks, answer items, perform actions, or respond to stimuli. They are used in education, psychology, medicine, language research, sports science, and human performance research.

A test may produce a total score, subscale score, pass/fail outcome, response time, error count, or classification. The researcher should document how scores are calculated. If several raters score the same work, the study should define the scoring rubric and check consistency across raters.

Document and record extraction

Document and record extraction uses existing materials as data sources. Examples include medical records, court files, policy documents, laboratory notebooks, student records, archives, meeting minutes, maps, photographs, and institutional reports.

This method needs an extraction form. The form states which fields will be taken from each source, how missing information will be coded, and what to do when a source contains conflicting values. Extraction also needs a record of where each value came from, so the researcher can trace errors later.

Sensors, instruments, and automated logs

Some data are collected by instruments or systems with little manual entry. Examples include accelerometers, temperature probes, air quality monitors, GPS trackers, laboratory analysers, imaging systems, learning platforms, and software logs.

Automated collection can reduce some forms of human error, but it creates other tasks. The researcher must check calibration, sampling frequency, device placement, file formats, timestamps, battery life, missing intervals, and data export rules. A sensor can collect thousands of values, but those values still need a method.

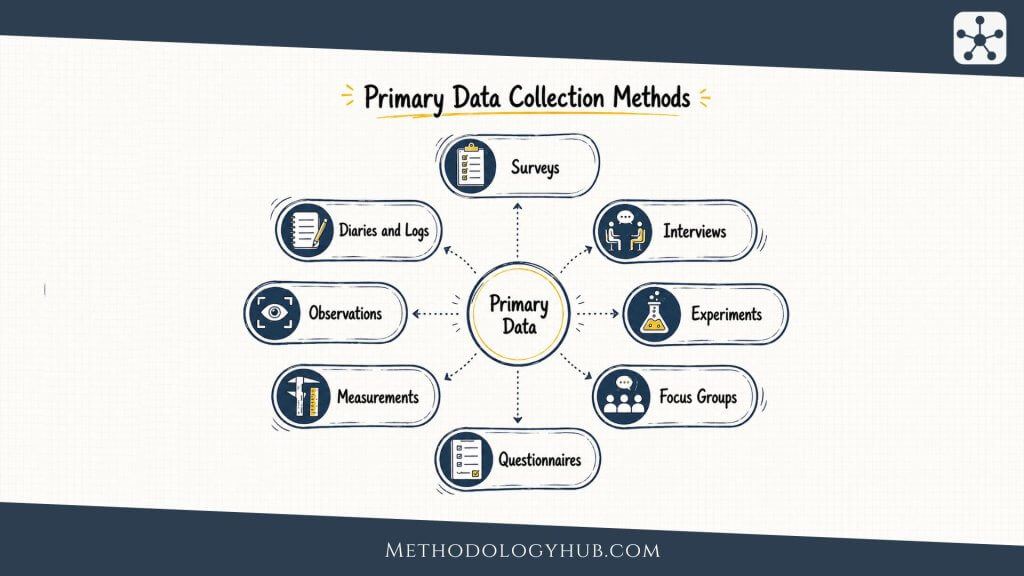

Primary Data Collection Methods

Primary data collection methods are used when the researcher gathers new data for the current study. This gives more control over variables, instruments, timing, and collection conditions. It also requires more planning, because the researcher is responsible for the quality of the collection process.

Primary data are useful when existing sources do not contain the right variables, use the wrong definitions, lack the needed population, or were collected at the wrong time. A researcher studying current laboratory performance, field conditions, student responses, or patient outcomes may need to collect new information rather than rely on existing records.

When primary data are suitable

Primary collection is suitable when the study needs information that does not already exist in a usable form. It is also suitable when the researcher needs standardised procedures across all observations. For example, if a study compares reaction time under three sound conditions, the researcher cannot usually take those values from a public dataset. The study must collect them under controlled conditions.

Primary collection can also be useful when the researcher needs a specific population. A university study of first-year chemistry students may need information from that exact group during the current semester. Older national datasets may not answer that local question.

Planning a primary collection instrument

The instrument should be built from the variables. A common mistake is to write interesting questions first and then decide what they measure later. It is better to list the variables, define each one, decide the measurement level, and then write the item, task, or procedure that will capture it.

A simple planning table can help:

- Variable: weekly study time

- Type: numerical, continuous or grouped

- Source: student self-report

- Instrument item: “During the last seven days, how many hours did you spend on independent study for this course?”

- Dataset format: number of hours, recorded to one decimal place

This small amount of planning reduces confusion later. It also shows whether an item is too broad, too vague, or unlikely to produce the value the analysis needs.

Pilot testing

A pilot test is a small trial of the collection method before the main study. It can reveal unclear questions, missing response options, long completion time, instrument problems, file export errors, or procedures that data collectors interpret differently.

A pilot does not need to be large to be useful. Even a few participants, records, samples, or field trials can show whether the collection plan works. The point is not to produce final results. The point is to find avoidable problems before they affect the main dataset.

Training data collectors

When more than one person collects data, training is part of the method. Data collectors need the same definitions, forms, examples, and decision rules. If one observer counts an event and another observer ignores it, the dataset will reflect differences between collectors rather than differences in the study setting.

Training may include practice cases, calibration exercises, scoring examples, mock interviews, instrument checks, or supervised field collection. The study should keep a short record of training dates, materials, and any changes made after practice sessions.

Strengths and limits of primary data collection

Primary data can be closely matched to the research question. The researcher can define the variables, select the population, and decide how the information will enter the dataset. The limits are time, cost, access, and burden on participants or data collectors.

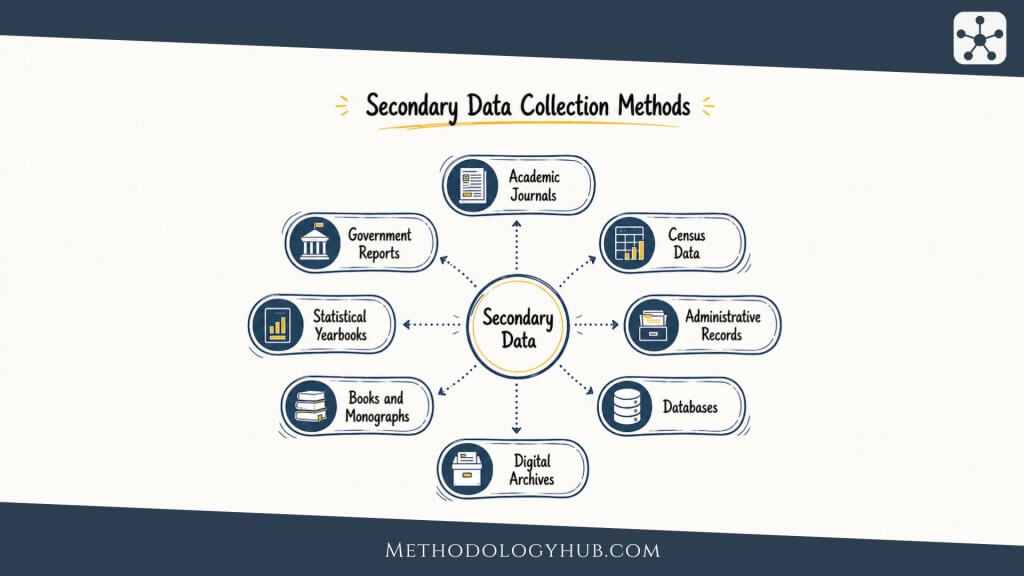

Secondary Data Collection Methods

Secondary data collection methods use information that was collected before the current study. The researcher does not create the original data, but selects, extracts, cleans, and analyses data from existing sources. This can be efficient, especially when the source covers many people, long time periods, rare events, or large geographic areas.

Secondary data are common in epidemiology, economics, education, public policy, history, environmental science, demography, and data science. They can come from government datasets, registries, archives, institutional databases, published supplementary files, academic journals, research databases and repositories, and previous research projects.

When secondary data are suitable

Secondary data are suitable when an existing source contains the variables, population, period, and level of detail needed for the question. For example, a researcher studying long-term temperature patterns may use meteorological records rather than collect new measurements for decades. A researcher studying graduation rates may use institutional records if the definitions and coverage are clear.

The fit should be checked carefully. A large dataset is not automatically useful. It may lack the outcome variable, use a different age range, combine categories that the study needs to separate, or collect values at intervals that are too wide for the analysis.

Checking the original collection process

Secondary data must be judged by how they were first collected. The researcher should look for documentation, codebooks, sampling, instrument descriptions, missing data codes, date ranges, changes in definitions, and known limitations.

Important questions include:

- Who collected the original data?

- For what purpose were the data collected?

- Which population or units were included?

- Which variables were recorded, and how were they defined?

- Were any definitions changed during the collection period?

- How are missing, unknown, or not applicable values coded?

- Can the data be linked to other sources without changing the unit of analysis?

Extracting data from records and archives

Record extraction should be planned like primary collection. The researcher should use an extraction template that names each field, source location, coding rule, and allowed value. This is especially important when records are not standardised or when the same information may appear in several places.

If two researchers extract the same records, they should compare a sample of their extracted values. Differences can show that a definition is unclear or that the source is harder to interpret than expected.

Combining secondary datasets

Combining datasets can add useful variables, but it can also create errors. The researcher should check identifiers, time periods, geographic boundaries, measurement units, coding systems, and duplicate records. A school code, hospital code, or region name may change over time. A variable with the same label in two files may not have the same definition.

When data are merged, the final dataset should keep a record of source files, merge keys, date of access, and decisions made during cleaning. This helps another researcher understand how the analytic file was built.

Strengths and limits of secondary data collection

Secondary data can be broad, cost-efficient, and useful for long-term or large-scale questions. It can also reduce the need to collect information again from people or environments that have already been studied.

The limits are control and fit. The researcher inherits the original collection choices. If the source used unclear definitions or missing values are common, the analysis may be limited. A secondary source can support a study well only when its documentation is strong enough to judge.

How to Choose Data Collection Methods

Choosing data collection methods means matching the research question with the type of evidence needed. The best method is not the one that sounds most advanced. It is the one that can collect the right data with the least unnecessary error.

The choice usually depends on the question, variables, population, setting, resources, time frame, and planned analysis. A method that works well for one study can be unsuitable for another. A survey may be appropriate for estimating reported study habits in a large student group. It may be weak for measuring actual reaction time, which needs a task or instrument.

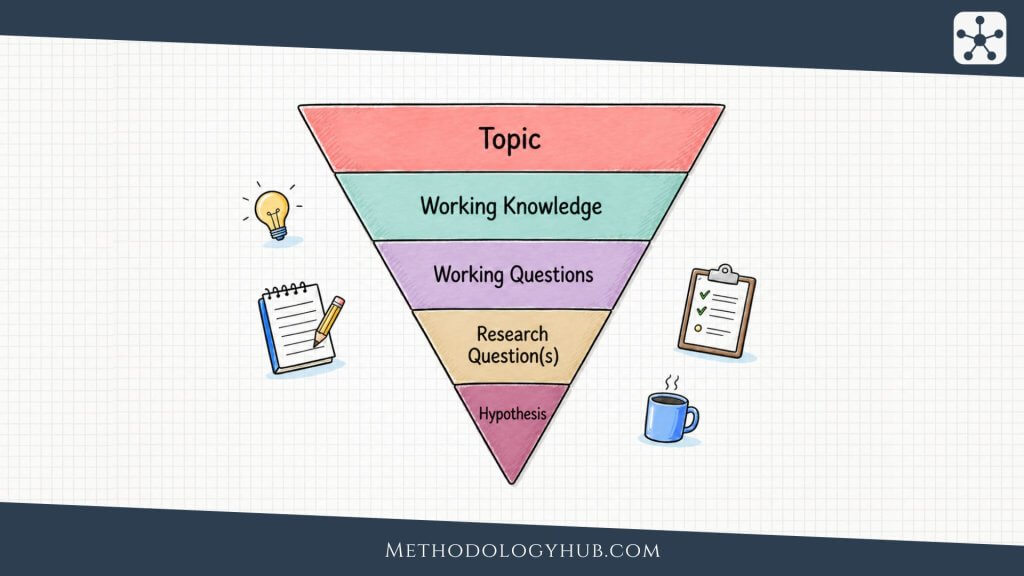

Start with the research question

Write the question before choosing the method. Then underline the parts that need evidence. If the question asks, “Does weekly retrieval practice improve final test scores among first-year biology students?” the collection plan needs at least a condition or exposure, a defined student group, and a final test score. It may also need prior achievement, attendance, or completion data.

If the question asks, “How accurately do two devices measure resting heart rate during seated recovery?” the collection plan needs both devices, the timing of readings, the posture, the recovery period, and a comparison standard if one is available.

Match the method to the variable

Each variable needs a collection route. Some variables are directly measured. Some are reported by participants. Some are extracted from records. Some are calculated from several fields.

- Age: questionnaire item, record extract, or calculated from date of birth.

- Blood pressure: instrument reading under a defined procedure.

- Exam performance: official score, standardised test, or scored task.

- Task completion: platform log, checklist, or observer record.

- Soil moisture: field sensor, laboratory test, or manual measurement.

The same concept can have several possible measures. The researcher should choose the one that fits the analysis and the setting. A self-report item may be enough for some questions, while an instrument reading is needed for others.

Decide the unit of analysis

The unit of analysis is the level at which data will be analysed. It may be an individual person, classroom, school, hospital, field plot, specimen, document, country, event, or time point. The data collection method must gather information at that level.

Problems occur when the unit of collection and the unit of analysis do not match. A study may collect school-level averages but try to make claims about individual students. Or it may collect individual-level observations but draw conclusions about entire institutions without enough institutional data.

Consider timing

Timing is part of the method. Some data are collected once. Some are collected before and after an intervention. Some are collected repeatedly over weeks, months, or years. Some are collected continuously by devices.

The timing should match the expected change. If an outcome changes slowly, daily collection may be unnecessary. If it changes quickly, a single end-point measurement may miss the pattern.

Check feasibility without weakening the design

Feasibility includes access, time, staffing, equipment, participant burden, and data management. It is better to choose a simpler method that can be carried out consistently than a complex method that breaks down halfway through collection.

Feasibility should not become an excuse for poor measurement. If the question needs a measured outcome, a vague self-report question may not be enough. In that case, the researcher may need to narrow the question, reduce the sample, change the setting, or use secondary data.

Use more than one method when each has a clear job

Some studies use several methods. This can improve coverage, but only when each method has a defined purpose. A study may use official records for attendance, a questionnaire for study habits, and a test for performance. That combination is useful because each method collects a different variable.

Using several methods without a plan can create extra data that are difficult to connect. More data are not automatically better. The collection plan should say how each method answers a part of the question.

Data Collection Process

The data collection process turns a research plan into a usable dataset. It begins before the first value is recorded and continues until the data are stored, checked, and ready for analysis. A careful process does not make results automatic, but it makes the evidence easier to trust and easier to examine.

Step 1: Define the research question and objectives

Start by writing the research question and the specific objectives. The objectives should name what the study will describe, compare, estimate, test, or measure. If the objectives are broad, the collection plan will also be broad.

For example, “study student learning” is too wide for a collection plan. “Compare final test scores between students assigned to weekly retrieval practice and students assigned to weekly rereading” is ready for variables and procedures.

Step 2: Identify variables and data types

List each variable. Then define its type, source, unit, allowed values, and role in the study. This is where independent and dependent variables should be named if the study uses that structure. It is also where demographic, background, grouping, or control variables can be defined.

A variable list should be practical. It should include variables that the study will actually use, not every possible detail that might be interesting.

Step 3: Choose the data collection methods

Select the methods that can produce the required variables. A study may need a survey for self-reported study time, a test for performance, and administrative records for course enrolment. Another study may need instrument readings and field notes.

Write down the reason for each method. This helps remove methods that do not have a clear job.

Step 4: Develop instruments and forms

Build the questionnaire, checklist, measurement sheet, extraction form, scoring rubric, or device protocol. The instrument should use the same variable names and formats that will appear in the dataset. This reduces recoding work and prevents ambiguity.

Forms should also include practical fields such as date, time, site, collector ID, source ID, instrument version, and notes for unusual cases.

Step 5: Select the sample or cases

The sample is the set of people, records, specimens, places, documents, events, or time points included in the study. The sampling plan should say who or what is eligible, how cases are selected, how many are needed, and what happens when a case is unavailable.

Sampling decisions affect interpretation. A convenience sample from one course, clinic, or field site may be suitable for some questions, but it should not be described as if it represents a much wider population.

Step 6: Pilot the method

Test the procedure on a small scale. Check whether participants understand questions, instruments work, observers apply definitions consistently, records contain the expected fields, and files export correctly.

After the pilot, revise the instrument or protocol if needed. Keep a note of the changes. A small revision before main collection can prevent a large cleaning problem later.

Step 7: Collect the data

Collect the data according to the written procedure. Record deviations as they occur. If a device fails, a participant skips an item, or a record is incomplete, the collection form should show what happened rather than hide it.

During collection, the researcher should monitor the data flow. Waiting until the end can allow repeated errors to continue unnoticed.

Step 8: Store and protect the data

Data should be stored in a stable format with clear file names, version control, access rules, and backup procedures. Personal identifiers should be separated from the working dataset when possible. A codebook should explain variable names, labels, units, and missing data codes.

Good storage is not only a technical issue. It helps the researcher remember what was collected and how each value should be understood.

Step 9: Check the dataset before analysis

Before analysis, check the dataset for impossible values, duplicates, missing values, inconsistent codes, out-of-range measurements, date errors, and unexpected patterns. These checks do not replace analysis. They make analysis possible.

Keep a record of cleaning decisions. If a value is corrected, removed, or recoded, the reason should be clear. The final dataset should not be a mystery even to the person who created it.

Data Quality in Data Collection Methods

Data quality depends on whether the collected values are accurate enough, consistent enough, complete enough, and suitable for the research question. Data collection methods affect all of these. Poor collection cannot always be repaired during analysis.

Quality is not a single property. A dataset can be complete but inaccurate. It can be accurate for one variable and weak for another. It can be large but poorly documented. The collection plan should define what quality means for the study before data are gathered.

Validity

Validity asks whether the method measures what it is supposed to measure. If a study wants to measure physical activity, a single question about gym attendance may miss walking, cycling, manual work, and sport. The method may be easy, but it may not measure the intended concept well.

Validity improves when variables are defined carefully, instruments match the concept, and procedures are tested before use. It also improves when the researcher avoids stretching one measure to represent more than it can support.

Reliability

Reliability refers to consistency. A reliable measurement procedure gives similar results under similar conditions, assuming the thing being measured has not changed. Reliability can involve instruments, observers, raters, forms, or repeated measurements.

For example, if two raters score the same writing sample using the same rubric, their scores should be close enough for the study’s purpose. If a scale gives different readings for the same object within minutes, it may need calibration or replacement.

Measurement error

Measurement error is the difference between the recorded value and the true value or best available reference value. It can come from instruments, respondents, observers, timing, environment, wording, or data entry.

Some error is random. It adds noise. Some error is systematic. It pushes values in one direction. For example, a scale that is not zeroed may overestimate all weights. A question that encourages socially acceptable answers may shift responses away from actual behaviour.

Bias in collection

Bias occurs when the collection process makes some values more likely than others in a way that distorts the evidence. Sampling bias may occur if the study misses part of the target population. Response bias may occur if some people are less likely to answer. Observer bias may occur if an observer expects one group to perform better and scores borderline cases differently.

Bias cannot always be removed, but it can often be reduced. Clear sampling rules, standardised instruments, training, blinding where possible, and careful documentation can help.

Missing data

Missing data are common. A participant may skip an item, a device may fail, a record may lack a field, or a sample may be unusable. The collection plan should define missing value codes and distinguish between “not asked”, “not applicable”, “unknown”, and “missing by error”.

Using one blank cell for all missing situations creates confusion. Different missing reasons may require different treatment during analysis.

Documentation

Documentation is part of data quality. A dataset without a codebook is difficult to use, even if the values are correct. Documentation should explain variable names, labels, units, value codes, missing codes, collection dates, source files, instrument versions, and cleaning decisions.

Good documentation also protects the researcher from relying on memory. A project that lasts several months can produce many small decisions. Those decisions should be written down while they are still clear.

Conclusion

Data collection methods shape the quality of a study from the beginning. A clear research question helps decide whether the study needs surveys, experiments, observations, interviews, existing records, digital traces, documents, measurements, or a combination of methods. The method should fit the type of data needed, the variables being studied, the population or material under investigation, and the level of accuracy required.

Good data collection is planned before the main analysis begins. Researchers need to define what will be collected, from whom or from what source, when it will be collected, and how it will be recorded. A dataset is only useful when the data inside it are consistent, relevant, and connected to the research aim. Careful planning also makes it easier to compare categorical, numerical, discrete, continuous, primary, secondary, objective, and subjective data in a meaningful way.

Sources and Recommended Readings

The following scientific publications and academic reference works discuss data collection methods, method selection, measurement, and data collection in research settings.

- Design: Selection of Data Collection Methods – A peer-reviewed methods article on selecting collection approaches in health professions education research.

- Data Collection Methods and Tools for Research – A scholarly article reviewing data collection methods, tools, and research planning steps.

- Research Data Collection Methods – An academic article indexed on JSTOR discussing data collection in research practice.

- Data Collection Methods – A SAGE academic book chapter from Understanding the Research Process.

- Data Collection Methods – A Springer reference entry from the Encyclopedia of Quality of Life and Well-Being Research.

- Data-collection methods – A Cambridge University Press chapter on using multiple data collection methods in fieldwork.

- Data Collection Methods – A Taylor & Francis academic chapter on data collection methods in applied research settings.

- Data collection methods for studying pedestrian behaviour – A scientific review of collection methods used in pedestrian behaviour research.

- Comparison of 3 data collection methods for gathering sensitive and less sensitive information – A peer-reviewed comparison of three data collection methods in health research.

- Acceptability of Active and Passive Data Collection Methods for Detection of Cognitive Change in Older Adults – A peer-reviewed article on active and passive data collection methods in health research.

FAQs on Data Collection Methods

What are data collection methods?

Data collection methods are planned procedures for gathering, recording, measuring, or extracting information for research. Examples include surveys, experiments, observation, tests, interviews, focus groups, record extraction, sensors, and secondary datasets.

What are the main types of data collection methods?

The main types include primary data collection methods, where new data are collected for the study, and secondary data collection methods, where existing records, datasets, archives, or publications are used. Methods can also be grouped by source, such as surveys, measurements, observations, documents, records, and devices.

How do you choose the best data collection method?

Choose the method by starting with the research question, defining the variables, identifying the unit of analysis, deciding the timing, checking available sources, and confirming that the collected data will support the planned analysis.

What is the difference between primary and secondary data collection?

Primary data collection gathers new information for the current study. Secondary data collection uses information that already exists, such as public datasets, records, registries, archives, or data from earlier studies.

What is a dataset in data collection?

A dataset is an organised collection of observations and variables. Each row often represents one observation, such as a participant, record, sample, document, or time point. Each column usually represents one variable.

What are independent and dependent variables in data collection?

An independent variable is the condition, exposure, group, or predictor the study examines. A dependent variable is the outcome or response that may change. Data collection should define both before values are gathered.